Avoid causing harm. This principle is part of the classical Hippocratic oath, and it should also be part of the risk management specialist’s promise. Nevertheless, risk management methods that do more harm than good have become widespread. Fortunately, support is growing for quantitative methods with evidence of effectiveness, but there is still a long way to go before these methods are widely used.

Today, most companies assess risk with simple two-dimensional tools such as verbal scales and red-yellow-green communication. These methods, also known as qualitative methods, owe their wide use to authorities and standardization organisations, and many management consultants use them as well.

These methods use “green-yellow-red” or “high-medium-low” rating scales for both probability and consequence, after which risks are charted on a two-dimensional map (called a risk matrix or a “heat-map”). Sometimes a point scale (e.g., 1-3 or 1-5) is used to rate likelihood and consequence, and the two values are then multiplied to get a “risk score”.
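To make the “risk score” concrete, here is a minimal Python sketch of the multiplication on a 1-5 scale. The function name `risk_score` and the grouping dictionary `by_score` are our own illustrative choices and not part of any standard. The sketch only shows what the arithmetic does: very different combinations of likelihood and consequence end up with the same score.

```python
from itertools import product

def risk_score(likelihood, consequence):
    """Multiply two ordinal ratings into a single 'risk score'."""
    return likelihood * consequence

# Group every (likelihood, consequence) pair on a 1-5 scale by its score.
scale = range(1, 6)
by_score = {}
for likelihood, consequence in product(scale, scale):
    score = risk_score(likelihood, consequence)
    by_score.setdefault(score, []).append((likelihood, consequence))

# 25 cells collapse into fewer distinct scores.
print(len(by_score))

# A rare but severe risk and a frequent but trivial risk share one score.
print(risk_score(1, 5) == risk_score(5, 1))
```

The 25 cells of a 5×5 matrix collapse into 14 distinct scores, and a pair such as (1, 5) is indistinguishable from (5, 1) once the two ratings have been multiplied.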

In this article, we will briefly discuss some of the reasons why these methods act as an analytical placebo and can, in themselves, introduce risk. In doing so, we will argue that IT risk management should look to methods that have been used since the 1950s for everything of importance, such as the construction of bridges and high-rise buildings, the aircraft industry, and financial instruments.

We will look at three problems with risk matrices and verbal scales:

- Range compression

- Human and psychological problems

- The illusion of independence

Range compression in risk matrices

The first problem stems from the fact that many scoring methods compress a large outcome space into a few fixed steps (typically 3 or 5).

Let’s take an example:

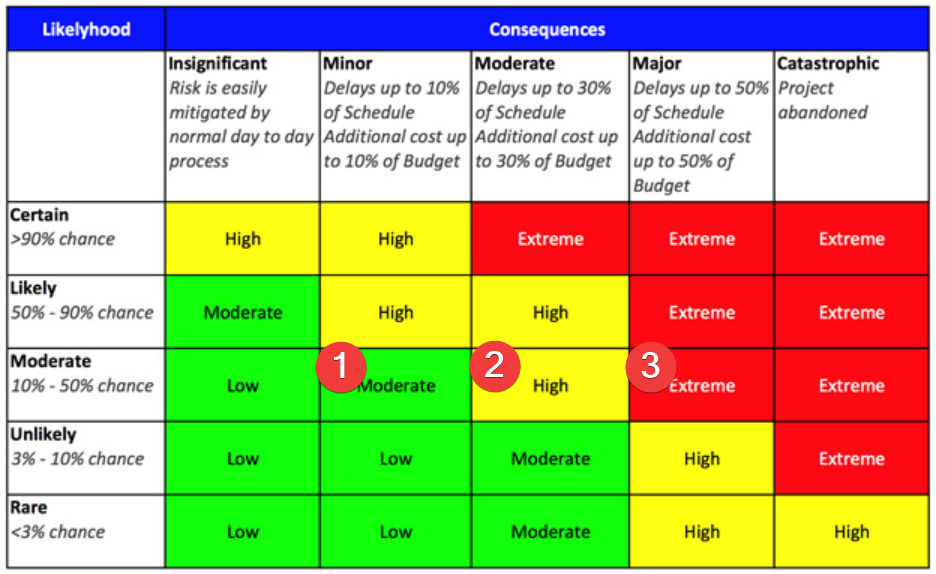

DTU has published the article “Concept of Risk Quantification and Methods used in Project Management”. The article contains references to, among other things, PMBOK, ISO31000 and PRINCE2 and provides an example of a risk matrix that looks like this:

For this example, we will go over the three cells marked with 1, 2 and 3. Also, we envision a project with a budget of DKK 1,000,000.

- Moderate risk, green: The cell covers a potential loss between DKK 1,000 and DKK 50,000 (10% x 1% of budget to 50% x 10% of budget)

- High risk, yellow: The cell covers a potential loss between DKK 11,000 and DKK 150,000 (10% x 11% of budget to 50% x 30% of budget)

- Extreme risk, red: The cell covers a potential loss between DKK 31,000 and DKK 250,000 (10% x 31% of budget to 50% x 50% of budget)

If we take an imaginary loss of DKK 40,000, it fits in all three cells and can thus be red, yellow, or green. You pick!

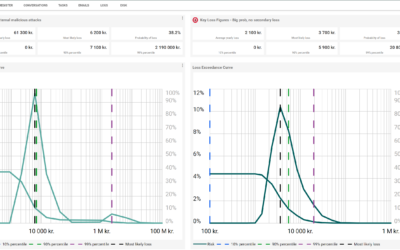
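The overlap between the three cells can be checked with a few lines of Python. This is a minimal sketch: the probability and consequence bounds are the percentages used in the example above, while the names `BUDGET`, `CELLS`, `loss_range` and `matching_cells` are our own and purely illustrative.

```python
BUDGET = 1_000_000  # DKK, the project budget assumed in the example

# (label, probability range, consequence range as a share of the budget)
CELLS = [
    ("Moderate (green)", (0.10, 0.50), (0.01, 0.10)),
    ("High (yellow)", (0.10, 0.50), (0.11, 0.30)),
    ("Extreme (red)", (0.10, 0.50), (0.31, 0.50)),
]

def loss_range(prob, share, budget=BUDGET):
    """Lowest and highest probability-weighted loss a cell can represent."""
    low = round(prob[0] * share[0] * budget)
    high = round(prob[1] * share[1] * budget)
    return low, high

def matching_cells(loss):
    """Labels of every cell whose loss range contains the given loss."""
    matches = []
    for label, prob, share in CELLS:
        low, high = loss_range(prob, share)
        if low <= loss <= high:
            matches.append(label)
    return matches

for label, prob, share in CELLS:
    print(label, loss_range(prob, share))

print(matching_cells(40_000))
```

Calling `matching_cells(40_000)` returns all three labels, which is exactly the ambiguity described above: the colour of the risk depends on who fills in the matrix, not on the loss itself.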

Attacks from external malicious actors (cyber-attacks) are, for the same reasons, very difficult to place on a scale. Historical events show that they can be very cheap (if we are talking about a “hack” where only a very small part of the infrastructure is affected) or extremely expensive (if it is a NotPetya-like attack that takes down the company’s entire digital platform for months). How would one represent this very large outcome space in a matrix?

It is clear that this model has insufficient resolution to represent reality. The model is far too pixelated and, as has been said, “garbage times garbage is the square of garbage”.

Human and psychological problems in the use of scales

Let’s also touch on two problems related to how the human brain applies these models: the illusion of uniform intervals, and ambiguity and individual interpretation.

The illusion of uniform intervals: When using a scale such as 1-5, there is an intuitive notion that the meaning behind the individual steps of the scale corresponds to the meaning of the numbers.

We will use an example where the security of an IT system is scored from 1 to 5. Taken at face value, this would mean that a system that scores 2 is half as secure as a system that scores 4, and that a system that scores 5 is five times as secure as a system that scores 1. That is a fair reading of these methods; however, reality is quite different. If you evaluate the scales with a measurement-based approach, you discover that no such regularity exists. We find that an IT system that scores 2 does not have half as many loss events as a system that scores 1. Reality contains a complexity that cannot be simplified like that.

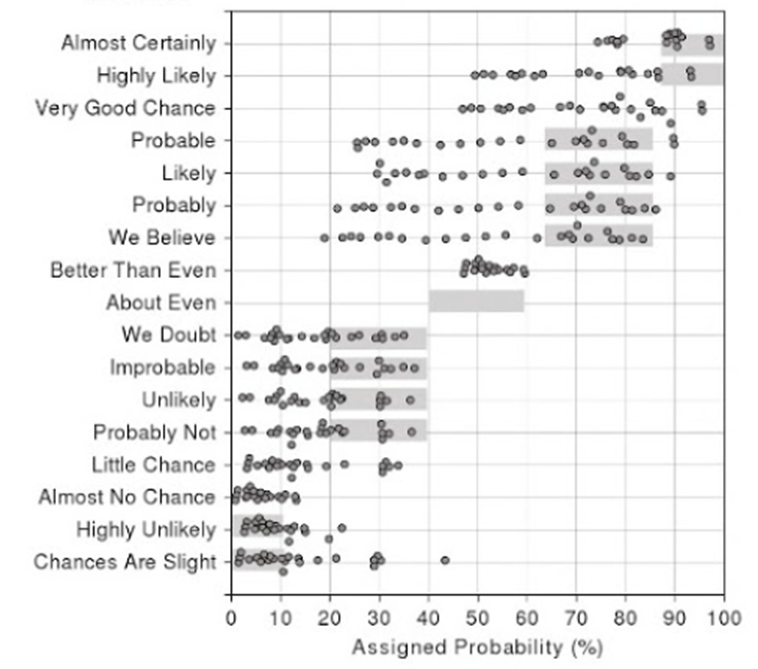

Ambiguity and individual interpretation: In a study, 23 NATO officers were asked to interpret a number of statements about probability. Unsurprisingly, the interpretations of the individual statements varied widely. The figure below shows the large spread. See, for example, the statement “Probably”, where the interpretations range from a probability of 25% to 90%.

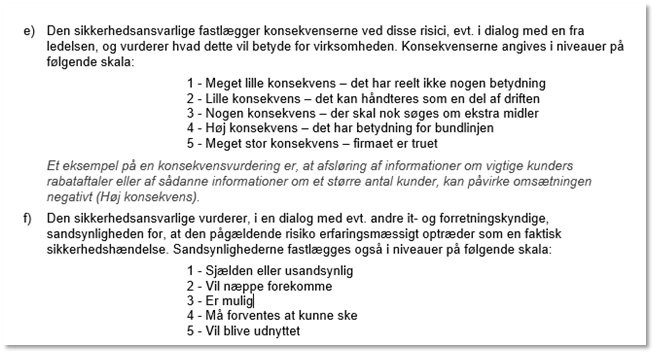

We also came across a template for risk assessments from the Danish Agency for Digitalisation with a 5×5 structure. Here is an excerpt from the guide (it’s only in Danish).

It is a very good example of the problem of ambiguity and individual interpretation. We do not believe that these scales provide any basis for assessing the difference between e.g., a 1 and 2. If anyone is able to define the difference between “Very small” and “Small”, please give us a call. Because we’re not able to.

The illusion of independence

Finally, there is the issue of correlations between different risks. When working with individual risks, their interdependence is often forgotten. Two risks that are each rated medium-likelihood, medium-consequence may add up to a much higher overall risk if they tend to occur at the same time, for example because they share a cause.
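A small calculation illustrates how much interdependence can matter. The sketch below is hypothetical throughout: the probabilities, the idea of a single shared cause, and all names (`P_DRIVER`, `marginal`, and so on) are assumptions made only for illustration. It also assumes that the two risks are independent of each other once we know whether the shared cause has occurred.

```python
# All numbers below are hypothetical and chosen only for illustration.
P_DRIVER = 0.20          # chance that a shared cause occurs
P_GIVEN_DRIVER = 0.90    # chance each risk materialises if the shared cause occurs
P_WITHOUT_DRIVER = 0.15  # chance each risk materialises otherwise

def marginal():
    """Probability that a single risk occurs, averaged over the shared cause."""
    return P_DRIVER * P_GIVEN_DRIVER + (1 - P_DRIVER) * P_WITHOUT_DRIVER

def joint_if_independent():
    """Probability that both occur if the risks are treated as independent."""
    return marginal() ** 2

def joint_with_shared_cause():
    """Probability that both occur when the shared cause is accounted for."""
    return (P_DRIVER * P_GIVEN_DRIVER ** 2
            + (1 - P_DRIVER) * P_WITHOUT_DRIVER ** 2)

print(f"single risk:        {marginal():.2f}")
print(f"both, independent:  {joint_if_independent():.2f}")
print(f"both, shared cause: {joint_with_shared_cause():.2f}")
```

With these assumed numbers, each risk on its own has a probability of about 0.30 and would land in the same cell of the matrix. Treated as independent, the chance that both occur is about 0.09; with the shared cause it is about 0.18, twice as high. Nothing in the matrix shows this difference.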

The next time you see a risk matrix with a number of ranked risks, try asking: “Are any of the individual risks interdependent? If they are, how is this represented?” Listen to the silence and draw your own conclusions.

Summary

The problem with the risk matrix is that it feels scientific. It promises a quick, simple solution to a complicated problem without taking up too much time and resources with challenging calculations.

Before, you had no idea about your risks. But now you’ve put them in neat little boxes and given them grades. You “understand and manage your risks”, but it is an illusion. Not only is there no evidence that risk matrices work; there is evidence to the contrary. The above arguments should be sufficient to establish it.

Actively using the matrix and making decisions with this tool absorbs time, money and effort for no benefit at all. We would go so far as to say that risk matrices should not be used for anything of importance. IT is important today, and IT risk management should therefore use methods that are based on sufficient evidence: methods that have been used since the 1950s for the construction of bridges and high-rise buildings, the aircraft industry, financial instruments, etc.

For a more detailed examination of this, read the book “The Failure of Risk Management: Why It’s Broken and How to Fix It” by Douglas W. Hubbard.

When you criticize something, you must also suggest something better. Of course. We intend to do this in our work and with the help of several future articles.

Stay tuned.